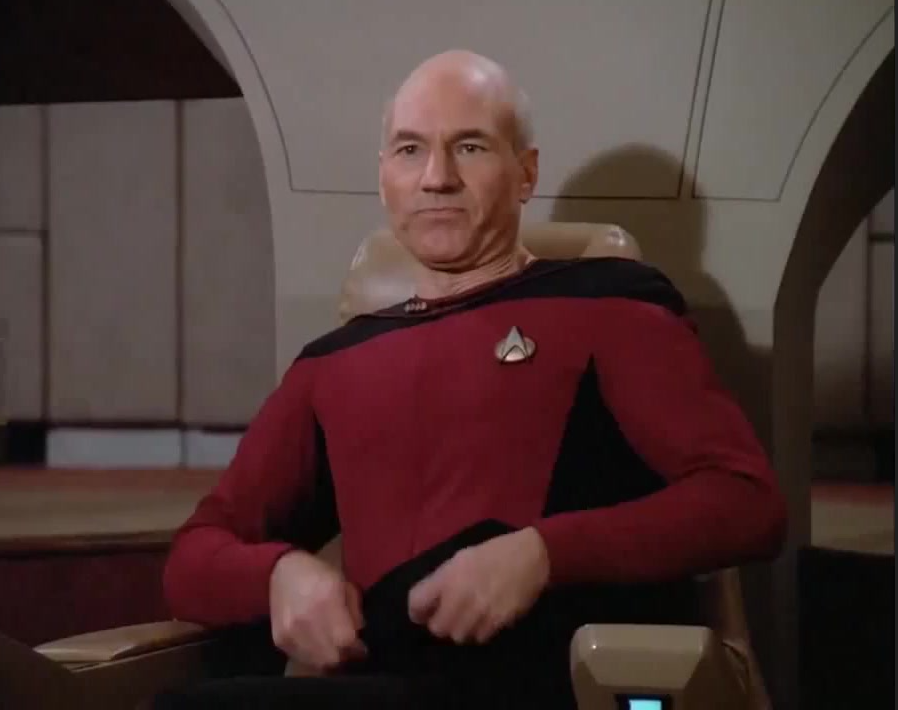

That’s how I feel when people complain about 4k only being 30fps on PS5.

I laugh because my 1080p tv lets the PS5 output at like 800fps.

but the ps5 is still limited by 30 fps even on 1080p

No, the PS5 can output higher FPS at 1080p.

What you might be thinking of is refresh rate, which yeah, even if the PS5 was doing 1080p/60fps, if you for some reason have a 1080p/30hz TV, you won’t be able to see anything above 30fps.

My 120 fps on ps5 1080 in front of me says that your comment is mistaken.

The fact it can output a 120Hz signal doesn’t mean the processor is making every frame. Many AAA games will be performing at well under 120fps especially in scenes with lots of action.

It’s not limited to 30fps like the other poster suggested though, I think most devs try to maintain at least 60fps.

Unlike Bethesda, who locks their brand new AAA games with terrible graphics at 30 fps and says that if you don’t feel that the game is responsive and butter smooth, then you’re simply wrong.

I’d almost bet money that Todd has never played a game at 60 fps or higher.

iirc that has more to do with lazy coding of their physics system with the ~~Gamebryo~~ Creation engine. From what I understand, the “correct” way for physics to work is more or less locked at 60fps or less, which is why in Skyrim you can have stuff flip out if you run it above 60fps and can even get stuck on random ledges and edges.

There are use cases for tying things to framerate, like every fighting game for example is basically made to be run at 60fps specifically - no more and no less.

This used to be the way that game engines were coded because it was the easiest way to do things like tick rates well, but like with pretty much all things Bethesda, they never bothered to try to keep up with the times.

There’s some hilarious footage out there of this in action with the first Dark Souls, which had its frame rate locked at I believe 30fps and its tick rate tied to that. A popular PC mod unlocked the frame rate, and at higher frame rates stuff like poison can tick so fast that it can kill you before you can react.
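For anyone wondering why that happens, here is a rough sketch of the difference (not actual Dark Souls or Bethesda code; the function names and damage numbers are made up for illustration). The first function applies one poison tick per rendered frame, the second scales damage by elapsed time so the frame rate stops mattering.

```python
def poison_damage_per_frame(fps, seconds, damage_per_tick=1.0):
    """Frame-tied logic: one poison tick is applied on every rendered frame."""
    frames = int(fps * seconds)
    return frames * damage_per_tick


def poison_damage_delta_time(fps, seconds, damage_per_second=30.0):
    """Frame-independent logic: each frame applies damage scaled by its duration."""
    dt = 1.0 / fps  # time covered by a single frame, in seconds
    frames = int(fps * seconds)
    total = 0.0
    for _ in range(frames):
        total += damage_per_second * dt
    return total


if __name__ == "__main__":
    for fps in (30, 60, 120):
        print(fps, poison_damage_per_frame(fps, 2), round(poison_damage_delta_time(fps, 2), 3))
```

With the per-frame version, going from 30fps to 60fps doubles the damage over the same two seconds (60 vs 120 with these made-up numbers), while the time-scaled version stays at about 60 either way.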

That kid was about as cool as kids could be back then. I wonder what he’s up to today.

https://knowyourmeme.com/memes/brent-rambo

Work(s/ed?) for Sony as of 2013 and worked on Planetside 2. Pretty cool!

> Work(s/ed?) for Sony as of 2013

Funny turn of events considering the meme

Neat!

Joke’s on you – I’m still using the last TV I bought in 2005. It has 2 HDMI ports and supports 1080i!

I miss this the most, older tv models would have like over 30 ports to connect anything you wanted. All newer models just have like 1 HDMI connection if even.

To add, these older screens last. New stuff just dies after a few years, or gets killed with a firmware upgrade.

PSA: Don’t connect your “smart” appliances to the internet, folks.

Is that a joke? My old TV has 3 and that’s the only reason I can still use it. 2 of them broke over the years.

Depends on the TV.

Many older mid to high end models had 4+ ports and it sucks you can rarely find a new one with 4 anymore.

My circa 2008 Sony Bravia has 6 HDMI ports that all still work.

I’ve got 4 HDMI 4k 120hz ports on my LG…

We had an older Hitachi tv with 4 HDMI plus component plus RCA input and 4 different options for audio input.

New Samsung TV. 2 HDMI, that’s it. One is ARC which is the only audio interface besides TOSLINK so really there’s effectively 1 HDMI to use.

But of course all the lovely ~~spyware~~ smart features more than make up for it.

My Smart TV made itself dumb when its built-in wifi just died one day. No loss.

Are you telling me there are modern TVs with only 1 HDMI port??

I was curious so I went and browsed some budget TVs on Walmart’s website. Even the no-name budget ones all had 3 HDMI. Maybe if it’s meant to be a monitor instead of a living room TV but I just looked at living room style TVs.

Thanks for putting in the work. People like you make the internet a better place

i feel like the only way you’d get one with a single HDMI port is with models that were built specifically for black friday (to maximize profit, by cutting out features)

The performance difference between 1080p and 720p on my computer makes me really question if 4k is worth it. My computer isn’t very good because it has an APU and it’s actually shocking what will run on it at low res. If I had a GPU that could run 4k I’d just use 1080p and have 120fps all the time.

Tldr: Higher resolutions afford greater screen sizes and closer viewing distances

There’s a treadmill effect when it comes to higher resolutions

You don’t mind the resolution you’re used to. When you upgrade the higher resolution will be nicer but then you’ll get used to it again and it doesn’t really improve the experience

The reason to upgrade to a higher resolution is because you want bigger screens

If you want a TV for a monitor, for instance, you’ll want 4k because you’re close enough that you’ll be able to SEE the pixels otherwise.

As long as you don’t know that there is anything better you will love 1080p. Once you have seen 2k you don’t want to switch back. Especially on bigger screens.

On the TV I like 1080p still. I remember the old CRT TVs with just bad resolution. In comparison 1080 is a dream.

However if the video is that high in quality you will like 4k on a big TV even more. But if the movie is only 720p (like most DVDs or streaming services), then 4k is worse than 1080p: you need some upscaling in order to have a clear image now.

1440p is the sweet spot. Very affordable these days to hit high FPS at 1440 including the monitors you need to drive it.

1080@120 is definitely low budget tier at this point.

Check out the PC Builder YouTube channel. Guy is great at talking gaming PC builds, prices, performance.

I was using an old plasma screen from around 2008/9 for a while until my girlfriend’s sister’s exboyfriend stole it. Their dad gifted me a 55in 4k tv that wasn’t bid on at an auction he was running. That plasma is probably gonna burn down Shithead’s place at some point, it was pretty sketchy.

It’s funny that we got to retina displays, which were supposed to be the highest resolution you’d ever need for the form factor, and then manufacturers just kept making higher and higher resolutions anyway because Number Go Up. I saw my first 8K laptop around this time and the only notable difference was that the default font size was unreadable.

I love to see the average iPhone fan (wastes $1000 every year) meet you

It’s so funny to me, for a few hundred more you can get an android that unfolds into a tablet lol if you’re going to drop a grand on a phone why not spend a little more and get something fresh

Credit where it’s due, since the post was about using old devices: iPhones have consistently had some of the longest software support in the industry.

good point, I feel like OP would have an iPhone due to this

bro i just want full raytracing-

This will either require AMD to go hard on ray tracing or for console manufacturers to get their video hardware from Nvidia, which will be far more expensive.

Though after some brief searching, my literal terminology may apply to AMD’s strategy: https://www.pcgamesn.com/amd/rdna-5-ray-tracing

Think we’re still a few gens from that.

Don’t worry. Either the PS9 won’t need a screen or we can sue for false advertising. https://youtu.be/iwhPkBHLdqE?feature=shared

Resolution =/= graphics

Televisions are one of the few things that have gotten cheaper and better these last 20 years. Treat yourself and upgrade.

I’ve been in stores which have demonstration 8K TVs.

Very impressive.

I’m still fine with my 720p and 1080p TVs. I’ve never once felt like I’ve missed out on something I was watching which I wouldn’t have if the resolution was higher and that’s really all I care about.

I think the impressiveness likely has more to do with other facets than the resolution. Without putting your face up to the glass, you won’t be able to discern a difference; human visual acuity just doesn’t get that high at a normal distance of a couple of meters or more.

I’d rather have a 1080P plasma than most cheap 4K LCDs. The demonstrators are likely OLED, which means supremely granular control of both color and brightness, like plasma used to be. Even nice LCDs have granular backlighting, sometimes with something like a 1920x1080 array of backlight to be close enough to OLED in terms of brightness control.
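On the visual acuity point, a back-of-the-envelope way to check it is to work out how many pixels land in each degree of your field of view. This is only a sketch: the function name is made up, and the roughly 60 pixels per degree figure often quoted for 20/20 vision (one arcminute per pixel) is an assumption, not a hard limit.

```python
import math

# Assumed rule of thumb: ~60 pixels per degree matches 20/20 acuity.
ACUITY_PPD = 60.0


def pixels_per_degree(diagonal_in, horizontal_px, vertical_px, distance_m):
    """Horizontal pixels per degree of visual angle for a flat screen viewed head-on."""
    aspect = horizontal_px / vertical_px
    # Screen width from the diagonal and the aspect ratio (Pythagoras).
    width_in = diagonal_in * aspect / math.sqrt(1 + aspect ** 2)
    width_m = width_in * 0.0254
    # Angle the screen width covers from the viewing position.
    fov_deg = 2 * math.degrees(math.atan(width_m / (2 * distance_m)))
    return horizontal_px / fov_deg


if __name__ == "__main__":
    for name, (w, h) in {"1080p": (1920, 1080), "4K": (3840, 2160), "8K": (7680, 4320)}.items():
        ppd = pixels_per_degree(55, w, h, 2.5)
        print(name, round(ppd, 1), "above assumed acuity limit" if ppd > ACUITY_PPD else "below")
```

For a 55 inch screen at 2.5 m, 1080p already works out to roughly 70 pixels per degree under these assumptions, which is why the extra pixels of 4K or 8K are hard to see unless the screen is bigger or you sit closer.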

I have a 4k tv with backlighting that matches the screen. When I take magic mushrooms and watch it I can see god

Except they turned into trash boxes in the last couple of years. Everything is a smart TV with ad potential and functionality that will eventually be unsupported. I’m holding onto my dumb TVs as long as I can.

We’ve got a pair of LG C1 OLEDs in the house, and the best thing we did was remove any network access whatsoever. Everything is now handled through Apple TVs (for AirPlay, Handoff etc.), but literally any decent media device or console would be an upgrade on what manufacturers bundle in.

well you can just not connect it to the internet and still have some extra features.

also if it’s an android tv, it’s probably fine (unless you have one with the new google tv dashboard)

these usually don’t come with ads or anything except regular google android tracking, and you can just unpin google play movies or whatnot.

Look up “commercial displays” or “commercial tvs” when the time comes.

“Monitor” is another. Not just for PC monitors.

Yup. Those cheap TV’s are being subsidized by advertisements that are built right in. If you don’t need the smart functionality, skip connecting it to the Internet. (If you can. Looking at you Roku TV’s!)

But be careful of the “smart” ones. If you have a “dumb” one that is working fine, keep it. I changed mine last year and I don’t like the new “smart” one. IDGAF about Netflix and Amazon Prime buttons or apps. And now I’m stuck with a TV that boots. All I want is to use the HDMI input but the TV has to be “on” all the time because it runs android. So if I unplug the TV, it has to boot an entire operating system before it can show you the HDMI input.

I don’t use any “smart” feature and I would very much have preferred to buy a “dumb” TV but “smart” ones are actually cheaper now.

Same for my parents. They use OTA with an antenna and their new smart TV has to boot into the tuner mode instead of just… showing TV. Being boomers they are confused as to why a TV boots into a menu where they have to select TV again to use it.

New TVs may be cheap, but it’s because of the “smart” “spying” function, and they are so annoying. I really don’t like them.

Can’t speak for your TV, but mine takes all of 16 seconds to boot up into the HDMI input from the moment I plug it in, and there’s a setting to change the default input when it powers on. I use two HDMI ports so I have it default to the last input, but I have the option to tell it to default to the home screen, a particular HDMI port, the AV ports, or antenna

Not a fan of the remote though. I don’t have any of these streaming services, and more importantly I’ll be dead and gone before I let this screen connect to the Internet

Yeah the bootup kills me. I got lucky that my current tv doesn’t do it. But man the last one I had took forever to turn on. It’s stupid.

Why give up a perfectly usable TV?

Why get a new pair of glasses when your prescription increases? My old glasses are still perfectly usable.

Because your old glasses aren’t perfectly usable… Not the best example.

Honestly don’t see the necessity. I’ve had the same computer monitor for 17 years.

Senseless tech lust

Or maybe most people that sit in front of a monitor all day are working and can benefit from a sharper image and more real estate. I work in tech and end up needing a lot of windows and terminals on the screen at once - upgrading from 1080p to 1440p was a game changer for productivity.

Senseful tech lust. I get it too

1050p 16:10, 1200p 16:10, or 1200p 4:3?

Just a normal 1080p 16:9. Wish I got a 16:10.

4k is the reasonable limit, combined with 120 FPS or so. Beyond that, the returns are extremely diminished and aren’t worth truly considering.

8k makes sense in the era of VR I guess. But for a screen? Meh

Even that’s a big stretch, haha.

The new Apple Vision Pro uses 2 4K screens to achieve almost perfect vision, so it’s not that big of a stretch.

4K I’d agree with, but going from 120 to 240fps is notable

8k is twice as big as 4k so it would be twice as good. Thanks for coming to my ted talk

That would sure be something if it was noticeably twice as good, haha.

My 16k monitor is noticeably twice as good as a 4k one /s

But it got 4 times the pixels, so 4 times as pixely!

8k is 4 4k tvs, so 4 times as good?
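For the record, the “4 times” figure checks out on raw pixel count. A quick sketch, assuming the usual consumer resolutions (3840x2160 for 4K and 7680x4320 for 8K); the helper names are made up:

```python
# Common consumer resolutions as (width, height) in pixels.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
    "4K": (3840, 2160),
    "8K": (7680, 4320),
}


def pixel_count(name):
    """Total number of pixels for a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def pixel_ratio(a, b):
    """How many times more pixels resolution a has than resolution b."""
    return pixel_count(a) / pixel_count(b)


if __name__ == "__main__":
    print(pixel_ratio("8K", "4K"))     # 4.0
    print(pixel_ratio("4K", "1080p"))  # 4.0
```

Doubling both width and height quadruples the pixels, so 8K is four 4K panels and sixteen 1080p panels. Whether that makes it 4 times as good is another matter.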

~~480~~ ~~720~~ ~~1080~~ ~~1440~~ 4k is as much as anyone’s gonna need, the next highest thing doesn’t look that much better

There are legitimately diminishing returns, realistically I would say 1080p would be fine to keep at max, but 4k really is the sweet spot. Eventually, there is a physical limit.

I fully agree, but I also try to keep aware of when I’m repeating patterns. I thought the same thing about 1080p that I do about 4k, and I want to be aware that I could be wrong again

Yep, I’m aware of it too, the biggest thing for me is that we know we are much closer to physical limitations now than we ever were before. I believe efficiency is going to be the focus, and perhaps energy consumption will be focused on more than raw performance gains outside of sound computing practices.

Once we hit that theoretical ceiling on the hardware level, performance will likely be gained at the software level, with more efficient and clean code.

I can’t really imagine being close enough to any screen where I need more than 1080p. I’m sitting across the room, not pressing my face against the glass.

A few years ago, I got a good deal on a 4K projector and set up a 135" screen on the wall. The lamp stopped working and I’ve put off replacing it. You know what didn’t stop working? The 10+ year old Haier 1080p TV with a ding in the screen and the two cinder blocks that currently keep it from sliding across the living room floor.

> The lamp stopped working and I’ve put off replacing it.

If you still have it, do it. Replacing the lamp on a projector is incredibly easy and only takes like 15 minutes.

If you only order the bulb without casing it’s also very cheap.

Yep I feel the same. I love how old stuff seems to last longer and longer and the new stuff breaks just out of the blue.

Why does it slide across the floor? Do you live on a boat?

I wish. It’s sitting on the floor and there’s a rug, so the cinder blocks are in front of it at the corners. Now my bed is a little more saggy. I might need some new furniture.

I still can’t visualise the problem. Do you have an ms paint diagram?

Yes, of course: https://imgur.com/a/24sk2bl

You’re awesome. Thanks for that! I think I get it now. Although having the cinder blocks obscure the corners would drive me insane. I need to see every pixel!

I’ve managed to set it up so that the blocks are only covering the frame–nothing blocked. Just enough to hold it up. It’s not too bad for the time being.

I have more questions after looking at the drawing.

Why does the TV slide?

Because it’s a flat screen without an appropriate stand or mount and the bottom is a smooth plastic, which, when combined with the rug, causes the TV to want to fall onto its back, thus the cinder blocks.

Have you ever stubbed your toes on the cinder blocks?

Damn, a color-coded key and everything! I can see you are a master of MS Paint diagrams.