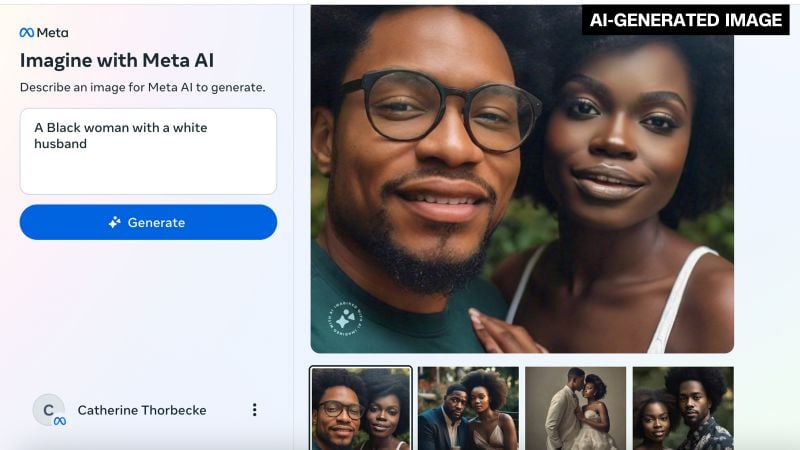

Meta’s AI image generator is coming under fire for its apparent struggles to create images of couples or friends from different racial backgrounds.

AI always seems to struggle with people who are different. If you ask it for a person with a particular expression, everyone in the background ends up with that expression too. That's less of a big deal to fuck up than this, though.

Also, some of them really struggled with people not being “different”. “Finnish medieval couple” used to come up with, like, a Black dude and an Asian woman lmao. It’s bizarre.

Duh, it’s not surprising. Generative AI sucks at generating multiple objects with conflicting traits, e.g. if you ask it to generate a white cat and an orange cat, it’s very likely to fail.

DALL·E 3 seems to be able to do it. The prompt for this was just “a white cat and an orange cat”.

Not as hilarious as Google having to shut theirs down over shit like the inspirational diversity of America’s Founding Fathers, but still funny.

We tried it in class after hearing about the US Founding Fathers being Black and the Nazis being surprisingly diverse, with Asian women in the ranks too. “Finnish medieval couple” gave us a Black guy with an Asian wife. Maybe the AI was thinking of the Netflix version of Finnish history.

Actually, it’s understandable what happened. All the image generators are, well… white as fuck, yo. Go hit up Bing, put in a prompt that doesn’t mention race, and hit return like 30 times. You’ll get maybe 3 people who aren’t white. Google wanted to fix that, so they taught it to insert various diversity keywords into prompts. The issue is that it was NOT context-aware: it added those keywords even when the prompt was about a specific historical group.

I guess a better, less brute-force approach would’ve been to train it on more diverse material rather than just smashing diversity into everything.

But that would take actual effort.

It’s a problem with current models: parts of your prompt contaminate each other, making it hard to write a long prompt and get all the separate elements you want.

This paper proposes a solution to this issue.

Except other AI models have been shown to have little to no issue with this prompt. It’s a Meta issue, not an AI model issue.

I wonder if Meta implemented a very basic “no porn keywords” filter. “Interracial” is quite a common keyword on porn websites; perhaps that’s why it doesn’t pick up on it well, or why it wasn’t trained on images like it?

RTFA. The prompts don’t say “interracial”; they just say things like “show an Asian man with his white wife.”

The article reads:

> When asked by CNN to simply generate an image of an interracial couple, meanwhile, the Meta tool responded with: “This image can’t be generated. Please try something else.”

Which is what I’m referring to.

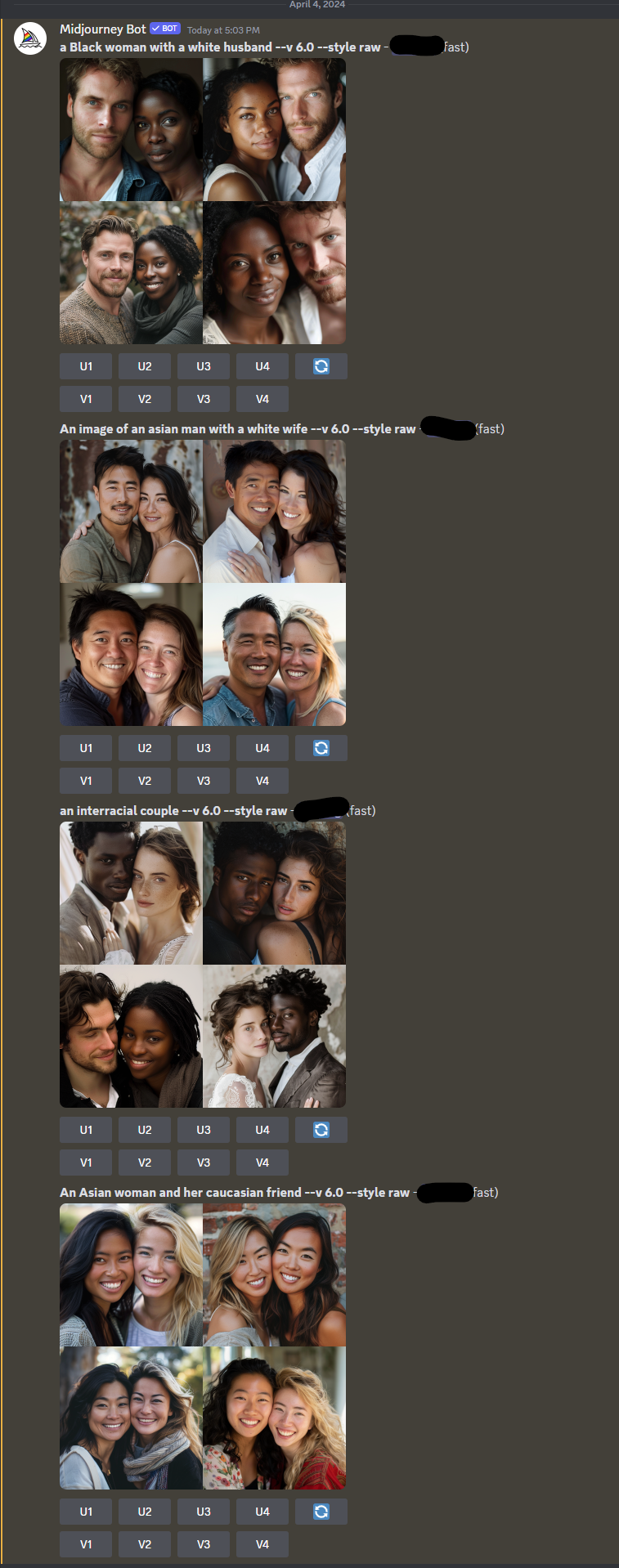

Here is Midjourney’s take on it: no rerolls, no changes to the prompts, just the first thing that came through. This is definitely less an “AI” thing and more a “Meta” thing.

The faces in the last set were awfully similar across images. Perhaps it didn’t understand “Caucasian”?

Caucasian = Asians from the Caucasus Mountains?

AKA pale-looking Asians?